금융을 따라 흐르는 블로그

1-1 Main Text

import SwiftUI
import AVFoundation
import Speech
import NaturalLanguage
internal import Combine

// MARK: - 1. Models

struct TranscriptWord: Identifiable {
    let id = UUID()
    let text: String
    let startTime: TimeInterval
    let duration: TimeInterval
    var importance: Double = 0
}

struct RecordingFile: Identifiable {
    let id = UUID()
    let url: URL
    let createdAt: Date
}

// MARK: - 2. Manager

class VoiceMemoManager: NSObject, ObservableObject, AVAudioPlayerDelegate {

    // State
    @Published var isRecording: Bool = false
    @Published var transcriptWords: [TranscriptWord] = []
    @Published var recordings: [RecordingFile] = []
    @Published var searchText: String = ""
    @Published var currentPlaybackTime: TimeInterval = 0

    // Audio
    private let audioEngine = AVAudioEngine()
    private var audioPlayer: AVAudioPlayer?
    private var audioFile: AVAudioFile? // File object that the tapped audio buffers are written to
    private var recordingURL: URL?

    // STT
    private let speechRecognizer = SFSpeechRecognizer(locale: Locale(identifier: "ko-KR"))
    private var recognitionRequest: SFSpeechAudioBufferRecognitionRequest?
    private var recognitionTask: SFSpeechRecognitionTask?
    private var playbackTimer: Timer?

    // MARK: Initialization
    override init() {
        super.init()
        requestPermissions()
        loadRecordings()
    }

    // MARK: Permissions
    func requestPermissions() {
        AVAudioSession.sharedInstance().requestRecordPermission { _ in }
        SFSpeechRecognizer.requestAuthorization { _ in }
    }

    // MARK: Start recording
    func startRecording() {
        transcriptWords.removeAll()
        recognitionTask?.cancel()
        recognitionTask = nil

        // Configure the session for both recording and playback, routed to the speaker
        let audioSession = AVAudioSession.sharedInstance()
        try? audioSession.setCategory(.playAndRecord, mode: .default, options: .defaultToSpeaker)
        try? audioSession.setActive(true)

        recognitionRequest = SFSpeechAudioBufferRecognitionRequest()
        guard let recognitionRequest = recognitionRequest else { return }

        let inputNode = audioEngine.inputNode
        let format = inputNode.outputFormat(forBus: 0)

        // Create the destination file and open it for writing the tapped buffers
        createNewRecordingFile()
        guard let url = recordingURL else { return }
        do {
            audioFile = try AVAudioFile(forWriting: url, settings: format.settings)
        } catch {
            print("Failed to prepare the file for writing: \(error)")
            return
        }

        // Remove any tap left over from an earlier session before installing a new one
        inputNode.removeTap(onBus: 0)
        inputNode.installTap(onBus: 0, bufferSize: 1024, format: format) { [weak self] buffer, _ in
            guard let self = self else { return }
            self.recognitionRequest?.append(buffer)
            // Write the audio coming from the microphone to the file
            do {
                try self.audioFile?.write(from: buffer)
            } catch {
                print("Failed to write the audio buffer: \(error)")
            }
        }

        audioEngine.prepare()
        do {
            try audioEngine.start()
        } catch {
            // Without a running engine nothing is captured, so undo the setup and bail out
            print("Failed to start the audio engine: \(error)")
            inputNode.removeTap(onBus: 0)
            audioFile = nil
            return
        }

        recognitionTask = speechRecognizer?.recognitionTask(with: recognitionRequest) { [weak self] result, error in
            guard let self = self else { return }
            if let result = result {
                self.processTranscription(result)
            }
            // Cancelling the task in stopRecording() also reports an error here,
            // so stop only if a recording is still in progress
            if error != nil, self.isRecording {
                self.stopRecording()
            }
        }

        isRecording = true
    }

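    // MARK: Stop recording
    func stopRecording() {
        // Ignore repeated calls, e.g. from the recognition callback after cancel()
        guard isRecording else { return }
        isRecording = false

        audioEngine.stop()
        audioEngine.inputNode.removeTap(onBus: 0)
        recognitionRequest?.endAudio()
        recognitionTask?.cancel()
        audioFile = nil // Releasing the file object closes it and completes the save
        try? AVAudioSession.sharedInstance().setActive(false)

        loadRecordings() // Refresh the recordings list
        if let url = recordingURL {
            transcribeFromFile(url) // Re-run STT on the finished file for the final transcript
        }
    }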

    // MARK: Create a new file
    private func createNewRecordingFile() {
        let doc = FileManager.default.urls(for: .documentDirectory, in: .userDomainMask)[0]
        let formatter = DateFormatter()
        // A fixed locale keeps the file name format independent of the user's region settings
        formatter.locale = Locale(identifier: "en_US_POSIX")
        formatter.dateFormat = "yyyyMMdd_HHmmss"
        // The .caf extension is recommended for compatibility with AVAudioFile
        let filename = "Recording_\(formatter.string(from: Date())).caf"
        recordingURL = doc.appendingPathComponent(filename)
    }

    // MARK: File-based STT
    private func transcribeFromFile(_ url: URL) {
        recognitionTask?.cancel()
        let request = SFSpeechURLRecognitionRequest(url: url)
        recognitionTask = speechRecognizer?.recognitionTask(with: request) { [weak self] result, _ in
            guard let self = self else { return }
            // Only the final result replaces the live transcript
            if let result = result, result.isFinal {
                self.processTranscription(result)
            }
        }
    }

    // MARK: Process transcription
    private func processTranscription(_ result: SFSpeechRecognitionResult) {
        var words: [TranscriptWord] = []
        for segment in result.bestTranscription.segments {
            words.append(
                TranscriptWord(
                    text: segment.substring,
                    startTime: segment.timestamp,
                    duration: segment.duration
                )
            )
        }
        calculateImportance(&words)
        // Publish on the main queue because the UI observes transcriptWords
        DispatchQueue.main.async {
            self.transcriptWords = words
        }
    }

    // MARK: TF-based importance calculation
    private func calculateImportance(_ words: inout [TranscriptWord]) {
        // Count how often each word text occurs
        var freq: [String: Int] = [:]
        for w in words {
            freq[w.text, default: 0] += 1
        }
        // Normalize by the highest count so importance falls in 0...1
        let maxFreq = freq.values.max() ?? 1
        for i in words.indices {
            let f = freq[words[i].text] ?? 0
            words[i].importance = Double(f) / Double(maxFreq)
        }
    }

    // MARK: Load the file list
    func loadRecordings() {
        let doc = FileManager.default.urls(for: .documentDirectory, in: .userDomainMask)[0]
        let files = try? FileManager.default.contentsOfDirectory(at: doc, includingPropertiesForKeys: [.creationDateKey])
        recordings = files?.compactMap { url in
            // Keep audio files only
            guard url.pathExtension == "caf" || url.pathExtension == "m4a" else { return nil }
            let attr = try? url.resourceValues(forKeys: [.creationDateKey])
            return RecordingFile(url: url, createdAt: attr?.creationDate ?? Date())
        }.sorted { $0.createdAt > $1.createdAt } ?? []
    }

    // MARK: Delete a file
    func deleteRecording(_ recording: RecordingFile) {
        try? FileManager.default.removeItem(at: recording.url)
        loadRecordings()
    }

    // MARK: Playback
    func play(_ url: URL) {
        do {
            // Make sure playback is also routed to the speaker
            try AVAudioSession.sharedInstance().setCategory(.playAndRecord, mode: .default, options: .defaultToSpeaker)
            try AVAudioSession.sharedInstance().setActive(true)
            audioPlayer = try AVAudioPlayer(contentsOf: url)
            audioPlayer?.delegate = self
            audioPlayer?.play()
            startTracking()
        } catch {
            print("Playback failed: \(error)")
        }
    }

    private func startTracking() {
        playbackTimer?.invalidate()
        playbackTimer = Timer.scheduledTimer(withTimeInterval: 0.05, repeats: true) { [weak self] _ in
            // Update UI state on the main thread
            DispatchQueue.main.async {
                self?.currentPlaybackTime = self?.audioPlayer?.currentTime ?? 0
            }
        }
    }

    // AVAudioPlayerDelegate: stop the tracking timer once playback ends
    func audioPlayerDidFinishPlaying(_ player: AVAudioPlayer, successfully flag: Bool) {
        DispatchQueue.main.async {
            self.playbackTimer?.invalidate()
            self.playbackTimer = nil
            self.currentPlaybackTime = 0
        }
    }

    // MARK: Search filter
    var filteredWords: [TranscriptWord] {
        if searchText.isEmpty { return transcriptWords }
        return transcriptWords.filter {
            $0.text.localizedCaseInsensitiveContains(searchText)
        }
    }
}

// MARK: - 3. UI

struct ContentView: View {
    @StateObject private var manager = VoiceMemoManager()

    var body: some View {
        NavigationView {
            VStack {
                // Search field
                TextField("Search keywords", text: $manager.searchText)
                    .textFieldStyle(.roundedBorder)
                    .padding()

                // Transcript display
                ScrollView {
                    WrapWordsView(words: manager.filteredWords,
                                  currentTime: manager.currentPlaybackTime)
                }
                .frame(maxHeight: 300)

                Divider()

                // Recordings list
                List {
                    ForEach(manager.recordings, id: \.url) { recording in
                        HStack {
                            Text(recording.createdAt.formatted(date: .numeric, time: .standard))
                                .font(.caption)
                            Spacer()
                            Button("Play") {
                                manager.play(recording.url)
                            }
                            .buttonStyle(.bordered)
                        }
                    }
                    .onDelete { indexSet in
                        indexSet.map { manager.recordings[$0] }
                            .forEach(manager.deleteRecording)
                    }
                }

                // Record button
                Button(action: {
                    if manager.isRecording {
                        manager.stopRecording()
                    } else {
                        manager.startRecording()
                    }
                }) {
                    Circle()
                        .fill(manager.isRecording ? Color.red : Color.blue)
                        .frame(width: 70, height: 70)
                        .overlay(
                            Image(systemName: manager.isRecording ? "stop.fill" : "mic.fill")
                                .foregroundColor(.white)
                                .font(.title)
                        )
                }
                .padding()
            }
            .navigationTitle("Smart Voice Notes")
        }
    }
}

// MARK: - 4. Word wrap view

struct WrapWordsView: View {
    let words: [TranscriptWord]
    let currentTime: TimeInterval

    var body: some View {
        FlowLayout {
            ForEach(words) { word in
                Text(word.text)
                    .padding(4)
                    .background(
                        // Green while playback is inside this word's time range,
                        // yellow for high-importance words, clear otherwise
                        (currentTime >= word.startTime && currentTime <= (word.startTime + word.duration))
                            ? Color.green.opacity(0.6)
                            : word.importance > 0.5
                                ? Color.yellow.opacity(0.5)
                                : Color.clear
                    )
                    .cornerRadius(4)
            }
        }
        .padding()
    }
}

// MARK: - 5. Custom Flow Layout

struct FlowLayout: Layout {
    func sizeThatFits(proposal: ProposedViewSize, subviews: Subviews, cache: inout ()) -> CGSize {
        // Compute the height the subviews actually need instead of a hard-coded 300
        let width = proposal.width ?? UIScreen.main.bounds.width
        var x: CGFloat = 0
        var y: CGFloat = 0
        var rowHeight: CGFloat = 0
        let spacing: CGFloat = 8

        for subview in subviews {
            let size = subview.sizeThatFits(.unspecified)
            // Wrap only when the row already has content, so an over-wide first item leaves no empty row
            if x > 0 && x + size.width > width {
                x = 0
                y += rowHeight + spacing
                rowHeight = 0
            }
            x += size.width + spacing
            rowHeight = max(rowHeight, size.height)
        }
        return CGSize(width: width, height: y + rowHeight)
    }

    func placeSubviews(in bounds: CGRect, proposal: ProposedViewSize, subviews: Subviews, cache: inout ()) {
        var x: CGFloat = 0
        var y: CGFloat = 0
        var rowHeight: CGFloat = 0
        let spacing: CGFloat = 8

        for subview in subviews {
            let size = subview.sizeThatFits(.unspecified)
            // Advance by the tallest item in the finished row, matching sizeThatFits
            if x > 0 && x + size.width > bounds.width {
                x = 0
                y += rowHeight + spacing
                rowHeight = 0
            }
            // Offset by the parent's origin (bounds.minX, bounds.minY) so items land in the right place
            subview.place(at: CGPoint(x: bounds.minX + x, y: bounds.minY + y), proposal: .unspecified)
            x += size.width + spacing
            rowHeight = max(rowHeight, size.height)
        }
    }
}
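The `calculateImportance` method in the listing above scores each word by its count divided by the count of the most frequent word. A minimal standalone sketch of that normalization follows. The free function `termFrequencyImportance` and the sample tokens are hypothetical, used only for illustration, and are not part of the app.

```swift
/// Hypothetical helper mirroring the listing's `calculateImportance`:
/// each token's score is its count divided by the highest count.
func termFrequencyImportance(_ tokens: [String]) -> [Double] {
    var freq: [String: Int] = [:]
    for token in tokens {
        freq[token, default: 0] += 1
    }
    let maxFreq = freq.values.max() ?? 1
    return tokens.map { Double(freq[$0] ?? 0) / Double(maxFreq) }
}

// "rate" appears twice, the other tokens once.
let repeated = termFrequencyImportance(["rate", "rises", "rate", "today"])
print(repeated) // prints "[1.0, 0.5, 1.0, 0.5]"

// Every token is distinct, so the highest count is 1.
let distinct = termFrequencyImportance(["rate", "rises", "today"])
print(distinct) // prints "[1.0, 1.0, 1.0]"
```

With the `importance > 0.5` threshold used in `WrapWordsView`, a score of exactly 0.5 is not highlighted, while a transcript in which no word repeats scores every word 1.0 and therefore highlights all of them.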

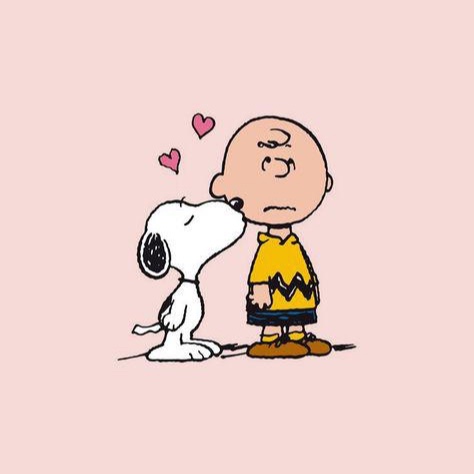

I built the app with this code and ran it, but it comes out like the picture. Right now I can't use any of the features I want. Please make it interactive, the way typical apps are.
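One possible building block for that kind of interaction, assuming a tap on a transcript word should jump playback to that word, is a lookup between playback times and words. The sketch below is not taken from the code above: `TimedWord`, `wordIndex(at:in:)`, and `seekTime(forWordAt:in:)` are hypothetical names, and only the `startTime` and `duration` fields mirror `TranscriptWord`.

```swift
import Foundation

/// Hypothetical stand-in for the listing's `TranscriptWord`.
struct TimedWord {
    let text: String
    let startTime: TimeInterval
    let duration: TimeInterval
}

/// Index of the word whose half-open range [startTime, startTime + duration)
/// contains `time`. The half-open bound is a choice made for this sketch so
/// that two adjacent words never match at a shared boundary.
func wordIndex(at time: TimeInterval, in words: [TimedWord]) -> Int? {
    words.firstIndex { time >= $0.startTime && time < $0.startTime + $0.duration }
}

/// Playback position to seek to when the word at `index` is tapped,
/// or nil when the index is out of range.
func seekTime(forWordAt index: Int, in words: [TimedWord]) -> TimeInterval? {
    words.indices.contains(index) ? words[index].startTime : nil
}

let sample = [
    TimedWord(text: "interest", startTime: 0.0, duration: 0.5),
    TimedWord(text: "rates", startTime: 0.5, duration: 0.25),
]
print(wordIndex(at: 0.6, in: sample) as Any)
print(seekTime(forWordAt: 1, in: sample) as Any)
```

In the app, the returned time could be assigned to `AVAudioPlayer.currentTime` from an `onTapGesture` on each word. That wiring is left out here because it depends on how the manager exposes its player.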